Cloud-native architecture is one of the most important shifts in modern software engineering because it changes how applications are designed, deployed, scaled, secured, and maintained across distributed environments. Instead of treating the cloud as just another hosting platform, cloud-native systems are deliberately built to exploit cloud characteristics such as elasticity, automation, scalability, resilience, and on-demand infrastructure provisioning. This approach lets applications evolve continuously, recover automatically from many failures, absorb fluctuating workloads, and run efficiently across global regions, while supporting rapid development and frequent updates with little or no downtime.

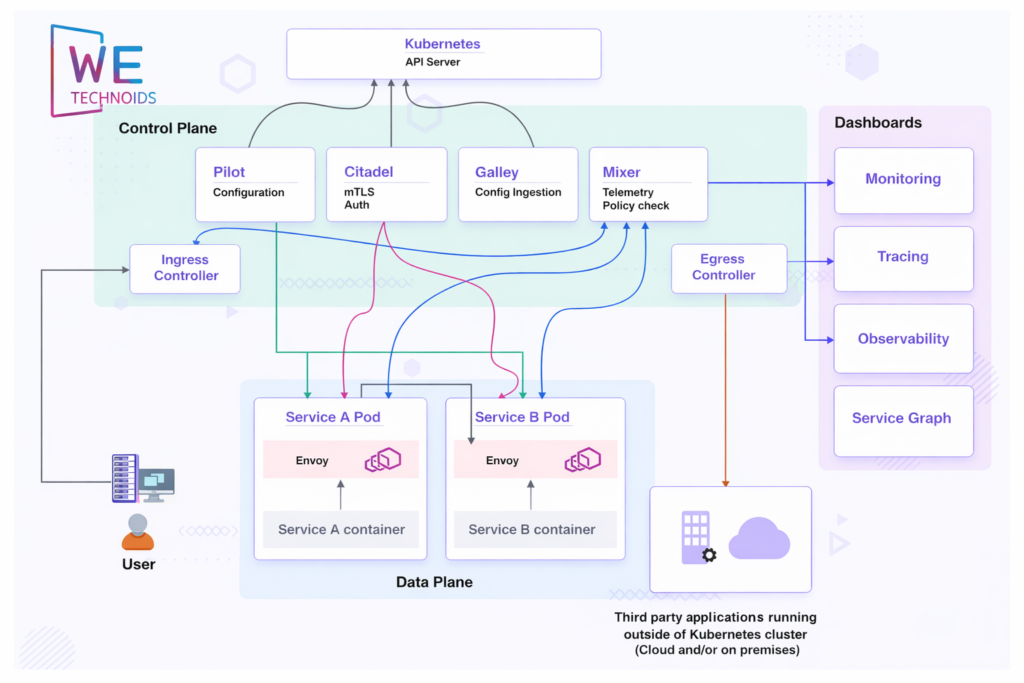

Traditional applications were built as large monolithic systems, in which all features, modules, and services lived inside a single deployable unit. While monoliths are sometimes simpler to manage at small scale, they become difficult to modify, update, or scale as the system grows. A single bug could affect the entire application, deployments required large releases, and scaling usually meant adding hardware to one large server or replicating the entire application, rather than scaling only the parts under load. Cloud-native architecture addresses these limitations by breaking systems into independent, modular components that can evolve separately, which reduces risk, improves reliability, and lets teams innovate faster.

In a cloud-native environment, software is designed with the expectation that infrastructure will fail, that traffic and user demand may spike or drop unexpectedly, and that new features must be delivered continuously. Instead of relying on manual responses to failures, cloud-native systems are built to self-heal, auto-scale, and recover through redundancy, automation, and orchestration. Development teams shift from managing individual servers to defining system behavior declaratively, and automation continuously adjusts infrastructure and workloads to match that definition as real-time conditions change.
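To make declarative management concrete, here is a minimal Python sketch of a reconciliation loop: the observe, compare, act pattern that Kubernetes controllers follow. The `Cluster` class and `reconcile` function are hypothetical stand-ins, and the toy cluster applies each correction instantly instead of calling real infrastructure APIs.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Hypothetical toy cluster: maps service name -> replicas currently running."""
    running: dict[str, int] = field(default_factory=dict)


def reconcile(desired: dict[str, int], cluster: Cluster) -> list[str]:
    """One pass of an observe/compare/act loop (hypothetical helper).

    Compares desired replica counts with what is running, records the
    corrective actions, and applies them to the toy cluster immediately.
    """
    actions = []
    for service, want in desired.items():
        have = cluster.running.get(service, 0)
        if have < want:
            actions.append(f"start {want - have} x {service}")
        elif have > want:
            actions.append(f"stop {have - want} x {service}")
        cluster.running[service] = want
    # Anything still running that is no longer declared gets removed.
    for service in list(cluster.running):
        if service not in desired:
            actions.append(f"stop {cluster.running[service]} x {service}")
            del cluster.running[service]
    return actions


# Example: one "api" replica crashed and "legacy" is no longer declared.
cluster = Cluster(running={"api": 2, "legacy": 1})
print(reconcile({"api": 3, "worker": 2}, cluster))
# prints ['start 1 x api', 'start 2 x worker', 'stop 1 x legacy']
```

Calling `reconcile` again with the same desired state produces no actions, and if a replica disappears the next pass starts a replacement. That idempotence is what allows such a loop to run continuously.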

What Cloud-Native Architecture Really Means

Cloud-native architecture is not just a technology stack; it is an engineering mindset. It means designing applications specifically for cloud environments rather than simply moving traditional systems onto virtual machines. A cloud-native system is modular, adaptive, distributed, resilient, and automated by default. The application is composed of multiple small services that communicate through APIs or messaging systems, and each service is deployed as its own unit, so it can scale, update, or restart without affecting the rest of the system.

A key aspect of cloud-native architecture is loose coupling. Services are intentionally designed so that they do not depend heavily on one another at runtime. This limits cascading failures: one service can fail or restart without taking down the entire application. Loose coupling also means that, instead of scaling the whole application at once, only the specific services under heavy load need to be scaled, which results in better performance, improved resource utilization, and reduced operational cost.
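Loose coupling is mostly a design property, but at runtime teams often pair it with a circuit breaker, a common resilience pattern (swapped in here as an illustration) that stops callers from repeatedly hitting a failing dependency. The sketch below assumes a synchronous, single-threaded caller; `CircuitBreaker`, its default thresholds, and the injectable `clock` are hypothetical choices.

```python
import time


class CircuitOpenError(Exception):
    """Raised instead of calling a dependency the breaker considers unhealthy."""


class CircuitBreaker:
    """Hypothetical, single-threaded circuit breaker sketch.

    closed -> open after `failure_threshold` consecutive failures;
    open -> half-open once `reset_timeout` seconds have passed;
    half-open -> closed on success, or back to open on failure.
    """

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock          # injectable so tests can control time
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_timeout:
            # Open: fail fast instead of adding load to a struggling service.
            raise CircuitOpenError("dependency temporarily unavailable")
        # Closed, or half-open (timeout elapsed): let the call through.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()   # trip (or re-trip) the breaker
            raise
        self.failures = 0
        self.opened_at = None                   # success closes the circuit
        return result
```

After `failure_threshold` consecutive failures the breaker fails fast with `CircuitOpenError`; once `reset_timeout` seconds pass it lets a trial call through and closes again if that call succeeds.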

Cloud-native architecture also embraces automation as a core foundation. Infrastructure provisioning, deployment, scaling, monitoring, and recovery are all handled through automated processes rather than manual intervention. This leads to faster development cycles, fewer human errors, and the ability to release updates frequently with minimal disruption to users.

Core Components of Cloud-Native Architecture

Microservices Architecture

In cloud-native systems, applications are divided into multiple microservices, each handling a specific business capability such as authentication, payments, analytics, notifications, or user management. Every microservice is developed, deployed, and scaled independently, which lets development teams work in parallel and release features faster. If one service needs additional processing power, only that service is scaled instead of the entire application, which reduces infrastructure cost and improves efficiency.
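Scaling only the service that needs it can be expressed as a per-service rule. The sketch below borrows the proportional formula documented for the Kubernetes Horizontal Pod Autoscaler, `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, and applies it independently to each service. The service names, CPU percentages, 60% target, and replica bounds are illustrative assumptions; real autoscalers also apply tolerances and stabilization windows that this sketch omits.

```python
def desired_replicas(current: int, cpu_pct: int, target_pct: int,
                     min_replicas: int = 1, max_replicas: int = 20) -> int:
    """Proportional rule: ceil(current * cpu_pct / target_pct), clamped to bounds."""
    wanted = (current * cpu_pct + target_pct - 1) // target_pct  # integer ceiling
    return max(min_replicas, min(max_replicas, wanted))


def plan_scaling(services: dict[str, tuple[int, int]], target_pct: int = 60) -> dict[str, int]:
    """Hypothetical planner: return new replica counts only for services that need a change.

    `services` maps name -> (current replicas, average CPU utilization in percent).
    """
    plan = {}
    for name, (replicas, cpu_pct) in services.items():
        new = desired_replicas(replicas, cpu_pct, target_pct)
        if new != replicas:
            plan[name] = new
    return plan


# Only the hot and the idle service change; "auth" is already at target.
print(plan_scaling({"payments": (4, 90), "auth": (2, 60), "notifications": (3, 10)}))
# prints {'payments': 6, 'notifications': 1}
```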

However, microservices introduce distributed complexity. Debugging becomes more challenging because requests travel across multiple services, networks, and containers. Teams must draw service boundaries carefully, avoid excessive coupling, and use robust communication patterns (for example, timeouts and retries) to maintain stability. Key points developers should consider:

• Microservices improve modularity and independence

• Each service can use different languages or frameworks

• Failures are isolated instead of system-wide

• Over-splitting services can create unnecessary complexity
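One common communication pattern for calls between microservices is retrying transient failures with capped exponential backoff and random jitter, so a brief network hiccup does not fail the whole request and many clients do not retry in lockstep. This is a minimal sketch under assumptions: `call_with_retries` is a hypothetical helper, which exceptions count as transient is a choice you would adapt, and retries are only safe for idempotent operations.

```python
import random
import time


def call_with_retries(fn, attempts=4, base_delay=0.1, max_delay=2.0,
                      retry_on=(ConnectionError, TimeoutError),
                      sleep=time.sleep, rng=random.random):
    """Hypothetical helper: retry transient failures with capped exponential backoff.

    Uses "full jitter": each wait is a random fraction of the current backoff cap.
    Only safe for idempotent operations, since a request may run more than once.
    Exceptions not listed in `retry_on` propagate immediately.
    """
    for attempt in range(attempts):
        try:
            return fn()
        except retry_on:
            if attempt == attempts - 1:
                raise                     # out of attempts: surface the error
            cap = min(max_delay, base_delay * 2 ** attempt)  # 0.1, 0.2, 0.4, ... seconds
            sleep(rng() * cap)            # randomize so clients don't retry in lockstep
```

Injecting `sleep` and `rng` keeps the function testable; in production the defaults use the real clock and random source.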

Containers - Consistent Runtime Across Environments

Containers are one of the building blocks of cloud-native architecture because they package an application together with its dependencies, runtime libraries, and environment configuration into a portable execution unit. This keeps the application's behavior consistent across development, staging, and production environments, largely eliminating “works on my machine” issues.

Containers are lightweight compared to virtual machines and start up much faster, which makes them well suited to dynamic scaling and automated deployments. They also support versioning, rollbacks, and immutable releases, improving deployment reliability and security. Important advantages include:

• Consistent runtime behavior across systems

• Faster startup and deployment cycles

• Easier rollback in case of failures

• Process-level isolation without the overhead of full virtualization

Containers enable predictable, repeatable, and portable deployment workflows.
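Immutable releases and easy rollback follow from identifying each image by its content rather than by a mutable name. Container images can be referenced by a `sha256:` digest; the sketch below imitates that by hashing raw bytes (real registries compute the digest over the image manifest), and `ReleaseHistory` is a hypothetical helper showing why rollback amounts to pointing back at an earlier, unchanged digest.

```python
import hashlib
from typing import Optional


def image_digest(content: bytes) -> str:
    """Content address: identical bytes always yield the same digest.

    Simplification: real registries hash the image manifest, not arbitrary bytes.
    """
    return "sha256:" + hashlib.sha256(content).hexdigest()


class ReleaseHistory:
    """Hypothetical record of which immutable image each deployment used."""

    def __init__(self) -> None:
        self._releases: list[str] = []

    def deploy(self, digest: str) -> None:
        self._releases.append(digest)

    @property
    def current(self) -> Optional[str]:
        return self._releases[-1] if self._releases else None

    def rollback(self) -> str:
        """Drop the latest release and point back at the previous, unchanged image."""
        if len(self._releases) < 2:
            raise RuntimeError("no earlier release to roll back to")
        self._releases.pop()
        return self._releases[-1]


history = ReleaseHistory()
history.deploy(image_digest(b"app build 1"))
history.deploy(image_digest(b"app build 2"))
print(history.rollback() == image_digest(b"app build 1"))  # prints True
```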

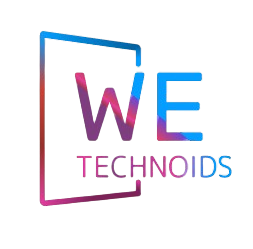

Kubernetes & Container Orchestration

Kubernetes is the most widely used container orchestration engine and underpins most cloud-native platforms. Instead of requiring teams to manage servers and containers by hand, Kubernetes schedules workloads, distributes traffic, restarts failed containers, and, when autoscaling is configured, scales applications up or down with demand.

Kubernetes treats infrastructure as a cluster rather than a set of separate machines. When a node fails, its workloads are rescheduled onto healthy nodes. When traffic increases, an autoscaler can create additional container replicas. During deployments, rolling updates replace instances gradually so the application stays available. Key capabilities provided by Kubernetes include:

• automated deployment and scaling

• self-healing (restarting or replacing failed containers)

• load balancing and service discovery

• resource management and scheduling

• declarative configuration through YAML manifests

Kubernetes enables organizations to operate highly reliable, large-scale distributed systems.
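Rescheduling after a node failure can be sketched as moving each pod from an unhealthy node to a healthy node that still has room. This Python sketch uses a simplified "most free CPU" rule with CPU requests in millicores; it is not the actual kube-scheduler algorithm, which filters and scores nodes on many more criteria, and `Node` and `reschedule_failed` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical node model; CPU is tracked in millicores."""
    name: str
    cpu_capacity: int
    healthy: bool = True
    pods: dict[str, int] = field(default_factory=dict)   # pod name -> CPU request

    def free_cpu(self) -> int:
        return self.cpu_capacity - sum(self.pods.values())


def reschedule_failed(nodes: list[Node]) -> dict[str, str]:
    """Move pods off unhealthy nodes onto the healthy node with the most free CPU.

    Returns {pod: new node}. Pods that fit nowhere stay where they are; a real
    scheduler would leave them Pending until capacity appears.
    """
    healthy = [n for n in nodes if n.healthy]
    moved = {}
    for node in nodes:
        if node.healthy:
            continue
        for pod, cpu in list(node.pods.items()):
            candidates = [n for n in healthy if n.free_cpu() >= cpu]
            if not candidates:
                continue
            target = max(candidates, key=Node.free_cpu)
            target.pods[pod] = cpu
            del node.pods[pod]
            moved[pod] = target.name
    return moved


nodes = [
    Node("node-a", 1000, healthy=False, pods={"web-1": 300, "web-2": 300}),
    Node("node-b", 1000, pods={"db-1": 500}),
    Node("node-c", 1000),
]
print(reschedule_failed(nodes))  # prints {'web-1': 'node-c', 'web-2': 'node-c'}
```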

DevOps, CI/CD & Continuous Delivery Culture

Cloud-native architecture depends heavily on DevOps practices, in which development and operations teams collaborate closely instead of working in isolation. Continuous Integration and Continuous Deployment (CI/CD) pipelines automate testing, building, security checks, and production releases, allowing teams to deploy updates more frequently and with less risk.

Instead of shipping large version releases, cloud-native applications are updated through small, incremental changes. Techniques such as blue-green deployments, canary releases, and progressive rollouts limit the impact of a bad release: blue-green deployments keep the previous version ready for an immediate switch back, while canary releases and progressive rollouts expose a new version to a small share of production traffic before full rollout. Core benefits of CI/CD in cloud-native systems include:

• faster feature delivery

• reduced deployment risk

• automated rollback when issues occur

• higher code quality and stability
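A canary release with automated rollback comes down to two decisions: how to split traffic, and whether to advance or abort the rollout based on observed errors. The sketch below is a simplified, hypothetical version of that logic; the traffic steps (5%, 25%, 50%, 100%), the 1.5x error-rate threshold, and the 0.1% floor are arbitrary assumptions, not values from any particular tool.

```python
import random


def route(canary_weight: float, rng=random.random) -> str:
    """Send roughly `canary_weight` (0.0 to 1.0) of requests to the new version."""
    return "canary" if rng() < canary_weight else "stable"


def next_canary_step(weight: float, canary_error_rate: float, stable_error_rate: float,
                     steps=(0.05, 0.25, 0.5, 1.0), max_ratio: float = 1.5,
                     min_budget: float = 0.001) -> float:
    """Hypothetical progressive-rollout policy.

    Roll back (return 0.0) if the canary's error rate exceeds max_ratio times the
    stable version's rate, with a small absolute floor so that a zero-error baseline
    does not turn any nonzero canary error rate into a rollback; otherwise advance.
    """
    budget = max(stable_error_rate * max_ratio, min_budget)
    if canary_error_rate > budget:
        return 0.0                     # automated rollback: all traffic back to stable
    for step in steps:
        if step > weight:
            return step                # promote to the next traffic share
    return weight                      # already fully rolled out
```

In practice, each step would hold for a fixed observation window so the error rates are measured over enough requests to be meaningful.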

Observability - Monitoring Distributed Cloud Systems

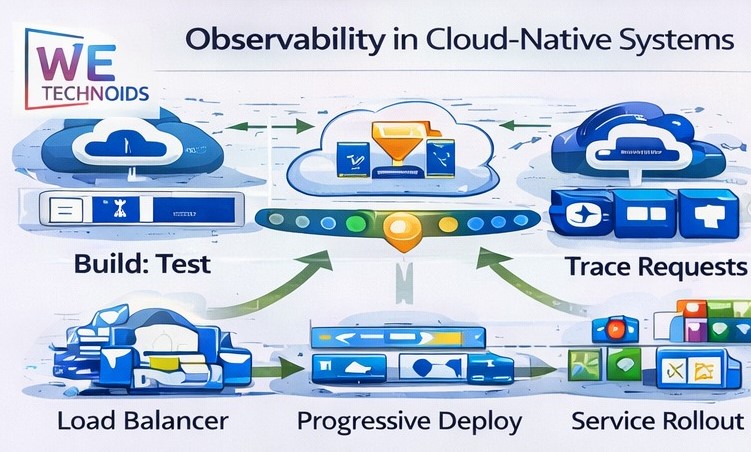

Because cloud-native architectures operate as distributed systems, observability is essential for understanding how services behave in real time. Observability is more than logging: it combines metrics, traces, and performance analytics that help teams detect failures, isolate performance bottlenecks, and assess system health.

Logs record application events and errors. Metrics provide quantitative signals such as CPU usage, latency, and throughput. Distributed tracing lets developers follow a single user request as it passes through multiple services, showing where delays or failures occur.

Observability makes large, complex systems manageable by improving visibility and operational confidence.
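Distributed tracing works because every service forwards a shared trace ID with each outgoing request. The W3C Trace Context specification defines a `traceparent` header of the form `version-traceid-parentid-flags` (32 and 16 lowercase hex characters for the two IDs). The sketch below starts a trace and derives a child context for a downstream call; the helper names are hypothetical, and it skips checks the specification requires, such as rejecting all-zero IDs.

```python
import secrets


def new_traceparent(sampled: bool = True) -> str:
    """Start a trace at the edge: fresh 16-byte trace ID and 8-byte span ID, as hex."""
    trace_id = secrets.token_hex(16)    # 32 hex characters
    span_id = secrets.token_hex(8)      # 16 hex characters
    flags = "01" if sampled else "00"   # 01 = sampled
    return f"00-{trace_id}-{span_id}-{flags}"


def child_traceparent(incoming: str) -> str:
    """Continue a trace downstream: keep the trace ID, mint a new span ID.

    Simplified: does not perform every validation the W3C spec requires.
    """
    version, trace_id, _parent_span, flags = incoming.split("-")
    if version != "00" or len(trace_id) != 32 or len(flags) != 2:
        raise ValueError(f"unsupported traceparent: {incoming!r}")
    return f"{version}-{trace_id}-{secrets.token_hex(8)}-{flags}"


# Hypothetical flow: a gateway starts a trace and forwards a child context
# in the `traceparent` header of its call to, say, a payments service.
incoming = new_traceparent()
outgoing = child_traceparent(incoming)
print(incoming.split("-")[1] == outgoing.split("-")[1])  # prints True
```

Each service would log its own span ID alongside the shared trace ID, so a tracing backend can stitch the spans into a single request timeline.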

Real-World Use Cases

Cloud-native architecture is widely adopted across industries because it supports rapid innovation and scalable system growth. SaaS applications rely on multi-tenant cloud environments to serve thousands or even millions of users across regions. Financial and fintech platforms use microservices and event-driven systems to process secure, real-time transactions. E-commerce platforms benefit from auto-scaling, allowing systems to handle seasonal traffic surges without downtime.

Streaming platforms, data analytics pipelines, AI applications, and IoT ecosystems also depend on cloud-native systems because they require high availability, distributed compute resources, and continuous processing of large data streams. Across all of these domains, cloud-native architecture enables:

• global performance

• business scalability

• rapid feature release cycles

• improved fault tolerance

• better infrastructure utilization

It allows organizations to innovate with far fewer infrastructure constraints.

Challenges & Architectural Trade-Offs

Although cloud-native architecture is powerful, it is not automatically simple or risk-free. Moving from a monolithic application to microservices introduces complexity in networking, communication, security, deployment, and debugging. Teams must manage service dependencies, version compatibility, failure isolation, and inter-service latency.

Operational overhead also increases in the early stages because organizations must invest in automation pipelines, monitoring systems, Kubernetes clusters, security rules, and architecture governance. Without proper planning, cloud costs can increase and systems may become overly fragmented.

The key challenge is balance: cloud-native systems require strategic planning, skilled engineering teams, and disciplined architecture decisions.

The Future of Cloud-Native Architecture

The future of cloud-native architecture is expanding toward serverless computing, event-driven design, edge computing, platform engineering, autonomous infrastructure, and AI-assisted operations. Instead of developers managing low-level infrastructure, platforms are expected to increasingly automate resource allocation, performance tuning, and security enforcement. Cloud-native development is likely to shift toward:

• serverless workflows

• WebAssembly and lightweight runtimes

• edge-distributed deployments

• internal developer platforms

• automated cost optimization

• intelligent DevOps practices

The long-term direction is toward systems that are more autonomous, scalable, and application-centric, reducing operational burden while increasing innovation capacity.